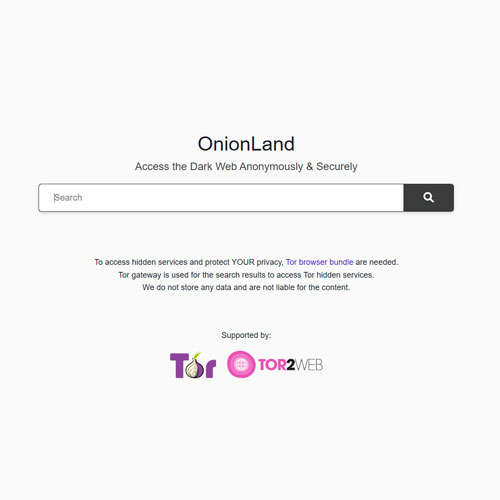

- Search engine for Tor hidden services

- Indexes .onion sites for anonymous browsing

- Enhances privacy and uncensored information access

- Used by privacy-conscious internet users

Onion Search Engines: Architecture, Functionality, and Implications for Privacy

Introduction

The rise of the dark web has created a distinct ecosystem within the Internet, characterized by anonymized networks and encrypted communications. At the core of this ecosystem are onion services, which are reached through the Tor network. Navigating this hidden layer requires specialized tools, among which onion search engines play a critical role. These search engines allow users to find hidden services (.onion domains), a task that traditional search engines cannot accomplish because .onion addresses resolve only inside the Tor network and are out of reach of ordinary web crawlers.

Unlike conventional search engines that index publicly accessible websites, onion search engines operate within a network designed to maximize anonymity and resist tracking. This paper examines their architecture, advantages, limitations, and impact on digital privacy.

The Concept of Onion Search Engines

– Definition and Function

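An onion search engine is a system that indexes websites hosted as Tor hidden services (now officially called onion services). These services use .onion addresses, which are derived from the service's cryptographic key and cannot be resolved outside the Tor network. Unlike surface web addresses, .onion addresses are not human-readable: a current (version 3) address is a 56-character base32 string. An address stays the same for as long as the operator keeps the same key, but services often go offline or move to new addresses, so links go stale quickly.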
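
To make "cryptographically generated" concrete, the sketch below encodes a 32-byte Ed25519 public key as a version 3 .onion hostname, following the address layout in the Tor rendezvous specification (public key, 2-byte checksum, version byte, all base32-encoded). The function names and the placeholder key are illustrative assumptions, and the code does not check that the bytes form a valid curve point.

```python
import base64
import hashlib

ONION_VERSION = b"\x03"  # version byte used by v3 onion services


def onion_address_from_pubkey(pubkey: bytes) -> str:
    """Encode a 32-byte Ed25519 public key as a v3 .onion hostname."""
    if len(pubkey) != 32:
        raise ValueError("expected a 32-byte Ed25519 public key")
    # checksum = first 2 bytes of SHA3-256(".onion checksum" | pubkey | version)
    checksum = hashlib.sha3_256(
        b".onion checksum" + pubkey + ONION_VERSION
    ).digest()[:2]
    label = base64.b32encode(pubkey + checksum + ONION_VERSION)
    return label.decode("ascii").lower() + ".onion"


def is_valid_onion_address(hostname: str) -> bool:
    """Check the length, version byte, and checksum of a v3 .onion hostname."""
    label, _, suffix = hostname.lower().rpartition(".")
    if suffix != "onion" or len(label) != 56:
        return False
    try:
        raw = base64.b32decode(label.upper())
    except ValueError:  # binascii.Error is a subclass of ValueError
        return False
    pubkey, checksum, version = raw[:32], raw[32:34], raw[34:]
    expected = hashlib.sha3_256(
        b".onion checksum" + pubkey + version
    ).digest()[:2]
    return version == ONION_VERSION and checksum == expected


# Placeholder bytes standing in for a real public key (assumption).
address = onion_address_from_pubkey(bytes(range(32)))
print(address, is_valid_onion_address(address))
```

A crawler can use a check like `is_valid_onion_address` to discard malformed links before spending a slow Tor request on them.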

The primary functions of onion search engines include:

- Indexing Tor-based websites while respecting privacy constraints,

- Providing searchable access to otherwise unreachable content,

- Supporting anonymity for both users and site owners.

– The Role of Anonymity

Onion search engines do not merely catalog websites; they also aim to protect user privacy. Unlike traditional engines, they typically:

- Avoid logging IP addresses,

- Use encrypted queries to prevent eavesdropping,

- Limit tracking and fingerprinting mechanisms.

This focus on anonymity aligns with the Tor network’s core philosophy: protecting both the identity of users and the location of hidden services.

Architecture and Mechanisms

– Crawling in the Tor Network

Unlike surface web crawlers, which traverse hyperlinked pages freely, Tor crawlers face unique challenges:

- Restricted access: Some hidden services implement rate-limiting or block crawlers entirely,

- Unstable addresses: services frequently go offline or move to new .onion addresses,

- Encrypted traffic: All communications occur within Tor circuits, introducing latency.

To overcome these challenges, onion search engines typically operate through multiple Tor circuits and cache indexed pages for efficiency.
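
The crawl-and-cache loop described above can be sketched as a breadth-first traversal that extracts .onion links from each fetched page. This is a minimal illustration, not the design of any particular engine: `crawl`, `fetch`, and `max_pages` are hypothetical names, and the network layer is injected by the caller so the sketch runs without Tor. In a real deployment `fetch` would send requests through a Tor SOCKS proxy (by default listening on 127.0.0.1:9050) and could spread them over several circuits, which is not modeled here.

```python
import re
from collections import deque
from typing import Callable, Dict, Optional

# v3 onion hostnames are 56 base32 characters followed by ".onion".
ONION_LINK = re.compile(r"https?://[a-z2-7]{56}\.onion[^\s\"'<>]*")


def crawl(
    seed: str,
    fetch: Callable[[str], Optional[str]],
    max_pages: int = 100,
) -> Dict[str, str]:
    """Breadth-first crawl from `seed`, returning a cache of URL -> page text.

    `fetch` returns the page text, or None when the service is unreachable
    or refuses the request (for example because it blocks crawlers).
    """
    cache: Dict[str, str] = {}
    seen = {seed}
    frontier = deque([seed])
    while frontier and len(cache) < max_pages:
        url = frontier.popleft()
        page = fetch(url)
        if page is None:
            continue  # offline or blocked: skip and keep crawling
        cache[url] = page
        for link in ONION_LINK.findall(page):
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return cache


# Usage with an in-memory stand-in for the network (made-up addresses).
host_a = "http://" + "a" * 56 + ".onion/"
host_b = "http://" + "b" * 56 + ".onion/"
fake_web = {host_a: f'<a href="{host_b}">link</a>', host_b: "no links here"}
print(sorted(crawl(host_a, fake_web.get)))
```

Keeping `fetch` separate from the traversal also makes it straightforward to add retries or rate limits without touching the frontier logic.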

– Indexing Strategies

Onion search engines cannot rely on conventional ranking algorithms like PageRank because hidden services often lack extensive interlinking. Therefore, they use alternative strategies:

- Frequency of keywords in content,

- Popularity metrics based on anonymized traffic estimates,

- Metadata embedded in services when available.
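
As an illustration of the first strategy, ranking by keyword frequency, the sketch below builds a small inverted index and scores pages with a simple TF-IDF weighting. It is an assumption about how such ranking could work, not the algorithm of any engine named in this article; the function names and sample pages are made up.

```python
import math
import re
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

Index = Dict[str, Dict[str, int]]


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages: Dict[str, str]) -> Index:
    """Map each term to {url: number of occurrences on that page}."""
    index: Index = defaultdict(dict)
    for url, text in pages.items():
        for term, count in Counter(tokenize(text)).items():
            index[term][url] = count
    return index


def search(index: Index, query: str, total_pages: int) -> List[Tuple[str, float]]:
    """Rank pages by the summed TF-IDF score of the query terms."""
    scores: Dict[str, float] = defaultdict(float)
    for term in tokenize(query):
        postings = index.get(term, {})
        if not postings:
            continue
        # Terms that appear on fewer pages carry more weight.
        idf = math.log(1 + total_pages / len(postings))
        for url, tf in postings.items():
            scores[url] += tf * idf
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


pages = {
    "mail.onion": "secure mail, secure mail service",
    "forum.onion": "forum about mail privacy",
    "library.onion": "library archive",
}
print(search(build_index(pages), "secure mail", len(pages)))
```

Because the score depends only on page text, it needs no link graph, which is the property that matters when interlinking is sparse.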

Comparative Analysis of Prominent Onion Search Engines

Several onion search engines have emerged, each with distinct features.

Ahmia is a privacy-focused search engine that indexes Tor hidden services while filtering out criminal or harmful content. Created by Finnish developer Juha Nurmi, it is an open-source project that also contributes analytics and statistics to the Tor Project. Unlike many darknet search engines, Ahmia emphasizes legitimacy, transparency, and safety, making it a trusted tool for researchers, journalists, activists, and everyday users. Its official onion mirror ensures anonymous access and resilience against censorship.

Torch is one of the oldest and most well-known darknet search engines, often described as “one of the first” to index hidden services in the Tor network. With a minimalist, retro-style interface and a large database of .onion sites, it has long served as a basic navigation tool for both newcomers and experienced users. Unlike more modern alternatives, Torch does not filter results, offering broad access but also exposing users to potential risks. Its longevity and pioneering role have made it a symbolic part of darknet history.

Below is a comparative summary:

| Feature / Engine | Ahmia | Torch | Not Evil | Haystak |
|---|---|---|---|---|
| User Interface | Clean, minimalistic | Simple, text-based | Basic, minimal | Advanced, professional |
| Search Capabilities | Keyword, filtered search | Keyword only | Keyword only | Keyword, metadata search |
| Anonymity Level | High | Medium | High | High |
| Index Size | Medium (~50k sites) | Large (~100k sites) | Medium | Very Large (~1.5M sites) |
| Special Features | Public surface web results, Tor-only results | No indexing rules | Focused on privacy | Paid access for advanced search |

Interesting fact: Haystak claims to be the largest onion search engine, containing over 1.5 million hidden pages, yet it restricts full access to paying subscribers, blending anonymity with monetization.

Advantages of Onion Search Engines

– Access to Hidden Content

Users can discover content that would otherwise remain inaccessible, including:

- Privacy-focused forums and blogs,

- Secure communication platforms,

- Research material hosted anonymously.

– Enhancement of Digital Privacy

By routing queries through Tor, these search engines make surveillance and tracking considerably harder, helping whistleblowers, journalists, and activists research sensitive topics more securely.

– Support for Legal and Ethical Uses

While onion networks are often associated with illegal activity, they also host:

- Academic archives,

- Secure messaging tools,

- Privacy-respecting email services.

Search engines facilitate discovery of these valuable services.

Limitations and Risks

– Accuracy and Index Completeness

- Many onion services block crawlers, leading to incomplete indexing,

- Services frequently go offline or move to new addresses, resulting in outdated search results.
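
One way an index could limit outdated results is to re-check entries periodically and drop those that stay unreachable. The sketch below assumes a hypothetical `is_reachable` probe supplied by the caller and an arbitrary threshold of three consecutive failures; neither is taken from any real engine.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class IndexEntry:
    url: str
    failed_checks: int = 0  # consecutive failed reachability checks


def refresh(
    entries: Dict[str, IndexEntry],
    is_reachable: Callable[[str], bool],
    max_failures: int = 3,
) -> Dict[str, IndexEntry]:
    """Re-check every entry and drop those that failed max_failures times in a row."""
    kept: Dict[str, IndexEntry] = {}
    for url, entry in entries.items():
        if is_reachable(url):
            entry.failed_checks = 0  # any success resets the counter
        else:
            entry.failed_checks += 1
        if entry.failed_checks < max_failures:
            kept[url] = entry
    return kept
```

Requiring several consecutive failures, rather than one, avoids discarding services that are only temporarily unreachable, which is common given Tor's latency and intermittent hosting.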

– Risk of Malicious Content

- Onion search engines cannot fully vet the content they index,

- Users may encounter phishing sites, malware, or scam services.
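
Because .onion addresses are hard to read, a phishing clone can imitate a known service by using an address that begins with the same characters. The sketch below is a simple heuristic under that assumption: it flags a candidate address that shares a long prefix with a trusted address without being identical to it. The function names, the prefix threshold, and the sample addresses are all illustrative, and a match is only a hint for further review, not proof of malice.

```python
from typing import Iterable, List


def shared_prefix_len(a: str, b: str) -> int:
    """Count how many leading characters two strings have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def flag_lookalikes(
    candidate: str,
    trusted: Iterable[str],
    min_prefix: int = 6,
) -> List[str]:
    """Return the trusted addresses that `candidate` resembles but does not equal."""
    candidate = candidate.lower()
    return [
        address
        for address in trusted
        if address.lower() != candidate
        and shared_prefix_len(candidate, address.lower()) >= min_prefix
    ]


# Made-up addresses for illustration only.
trusted = ["examplemail" + "a" * 45 + ".onion"]
suspect = "examplemail" + "b" * 45 + ".onion"
print(flag_lookalikes(suspect, trusted))
```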

– Performance and Latency

- Crawling and search queries are slower due to the multiple Tor relay hops,

- Users must balance privacy with speed.

Future Perspectives

Onion search engines are evolving in response to privacy demands and the expanding dark web ecosystem. Emerging trends include:

- AI-assisted indexing, improving relevance despite sparse interlinking,

- Decentralized search engines, reducing reliance on centralized index servers,

- Improved metadata standards, enhancing search efficiency while preserving anonymity.

Interesting observation: Some experimental engines are exploring blockchain-based indexing to achieve tamper-resistant, fully decentralized search without compromising Tor anonymity.

Conclusions

Onion search engines represent a unique category of tools that combine anonymity, accessibility, and privacy in the hidden web environment. They enable access to information inaccessible through traditional channels, support legal privacy-enhancing activities, and challenge conventional models of web indexing.